A rules-based quantitative approach to global equity markets. Built to compound across cycles, not chase them.

Results on this page are updated continuously as the strategy runs forward. Last updated: 4 May 2026.

MFAM's Quantitative Leveraged ETF Strategy is a fully rules-based trading system that combines three complementary quantitative engines (trend-following on the long side, mean reversion, and trend-following on the short side) across a basket of leveraged US and Chinese equity ETFs. Every entry, exit, and position size is driven by mathematical rules. There is no discretionary override.

Built on the concepts taught in our free trading course

The trend-following, mean-reversion, volatility-adjusted stops and regime-filter frameworks that drive this strategy are the same concepts taught step by step in the MFAM free trading course. If you want to understand how and why this system works, the course walks through the underlying mechanics in plain language. Access the free trading course here.

The short version

- 52.6 per cent annualised return versus 14.8 per cent for the S&P 500, May 2017 to April 2026, all results net of an all-in 30 basis-point per-fill charge that bundles MFAM commissions, broker fees and execution slippage. No separate management or performance fee.

- Maximum drawdown -29.5 per cent versus -33.7 per cent for the benchmark. Higher return at lower drawdown is the alpha signature of the strategy.

- Calmar ratio 1.78 versus 0.44 for SPY. Roughly four times the return per unit of worst-case drawdown.

- Thirteen leveraged ETFs across three engines — trend-following long (TQQQ, SOXL, YINN, AIBU, LABU), mean-reversion (DFEN, ERX, JNUG) and trend-following short (SQQQ, SOXS, YANG, AIBD, LABD). The engines rotate leadership across regimes so the strategy is rarely flat across all three at once and rarely fully exposed across all three at once.

- Cash account at Interactive Brokers, no broker margin. The investor holds custody of their own account. MFAM never holds investor funds.

- Non-discretionary general advice. Every trade signal requires the investor's explicit yes before the MFAM adviser places the order.

- Minimum investment AUD 20,000.

The full detail — methodology, the alpha-versus-beta split, engine design, the drawdown framework, the curve-fitting tests and the access mechanics — is set out in the sections below.

Table of Contents

- 1 Built on the concepts taught in our free trading course

- 2 The short version

- 3 Returns shown are already net of strategy fees

- 4 Context for this period

- 5 Beta is market exposure

- 6 Alpha is return that does not come from market exposure

- 7 How this strategy generates alpha

- 8 Historical Drawdown Events in Context

- 9 The honest caveat

- 10 Frequent but Bounded — the Diversification Fingerprint

- 11 Trend-Long Engine

- 12 Mean-Reversion Engine

- 13 Trend-Short Engine

- 14 Three Engines, Three Trade Profiles

- 15 How the Three Engines Switch Across Regimes

- 16 Why combining the three engines matters

- 17 Proprietary Signal Calibration

- 18 Trend-Long Engine Instruments

- 19 Mean-Reversion Engine Instruments

- 20 Trend-Short Engine Instruments

- 21 Why every signal is taken

- 22 How to tell curve fitting from a real edge

- 23 How the strategy was developed

- 24 What this does not prove

- 25 Your account, your custody

- 26 Signal delivery

- 27 Execution

- 28 Non-discretionary by design

- 29 Risk level is fixed, exposure scales with investment amount

- 30 Cash-account structure, no broker margin

- 31 Minimum investment

- 32 General Advice Warning

- 33 Hypothetical and Out-of-Sample Performance Disclosure

- 34 Portfolio Construction

- 35 Commissions and Fees

- 36 Past Performance

- 37 Leveraged Instruments

- 38 No Guarantee

- 39 About MFAM

Historical Performance

The strategy has been run against historical market data covering May 2017 through end-2024. From the start of 2025 onward the strategy has been running forward out-of-sample, with results accumulating on the same rules and all parameters frozen. Over the combined period the equity curve has meaningfully outpaced a passive S&P 500 investment.

Returns shown are already net of strategy fees

Every figure on this page — the +4,360% total return, the 52.6% CAGR, the equity curve, the per-year results, the parameter sweeps, all of it — is calculated net of an all-in 30 basis-point per-fill charge applied to every entry and every exit. The 30 bps is the actual per-fill figure, not a conservative placeholder. It bundles the MFAM management commission, broker commissions, bid-ask spread, and realistic execution slippage on the underlying leveraged ETFs into a single number. There is no separate management fee, performance fee, or platform fee layered on top of the figures shown. Exchange and regulatory pass-through fees, which a broker always charges on top of commissions, sit outside this 30 bps figure, as does tax, which depends on the investor's individual circumstances. The headline performance is materially above the S&P 500 with this real per-trade drag already applied to every fill in the calculation.
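The effect of a per-fill charge on a single trade can be sketched as follows. This is a minimal illustration, assuming the 30 basis points is deducted as a fraction of traded value at the entry fill and again at the exit fill; the page does not spell out the exact mechanics, and the function name is hypothetical.

```python
FEE_PER_FILL = 0.0030  # 30 basis points, the all-in per-fill charge quoted on this page


def net_round_trip_return(gross_return: float, fee_per_fill: float = FEE_PER_FILL) -> float:
    """Net return of one trade after a charge on the entry fill and on the exit fill.

    Assumption: the charge is a fraction of the traded value at each fill.
    """
    value_after_entry = 1.0 - fee_per_fill                    # charge on the entry fill
    value_at_exit = value_after_entry * (1.0 + gross_return)  # trade result before the exit charge
    value_after_exit = value_at_exit * (1.0 - fee_per_fill)   # charge on the exit fill
    return value_after_exit - 1.0


# Hypothetical gross trade results, for illustration only.
for gross in (0.00, 0.05, -0.05):
    print(f"gross {gross:+.2%} -> net {net_round_trip_return(gross):+.2%}")
```

Under this assumption a trade that is flat before costs ends roughly 0.6 per cent down after both fills, which is the drag every round trip has to clear.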

The strategy outperformed the benchmark in 9 of 10 calendar years. Outperformance years averaged roughly +34 percentage points above SPY, while the single underperformance year, 2018, trailed by about half a percentage point. The asymmetry reflects the system's design preference for capturing large directional moves rather than tracking the benchmark closely.

Context for this period

Long-run S&P 500 returns sit somewhere in the 7 to 10 per cent range depending on the period measured, averaging around 10 per cent in nominal terms over the past century. That average is made up of decades that look very different from each other, including the 1929-32 crash, the 1968-82 stagflation decade, the 2000-02 dot-com bust and the 2008 global financial crisis.

The May 2017 to April 2026 window has not looked like those decades. It has instead been one of the strongest stretches the benchmark has ever had, with a 14.8 per cent annualised return driven by uninterrupted tech-led leadership, fast recoveries from the 2020 COVID shock and the 2022 inflation shock, and an artificial-intelligence-driven capex cycle from 2023 onward. The benchmark is running well above its long-run average and sets a high bar.

The strategy outperformed that elevated benchmark by a meaningful margin. Historically, the strategy's edge has been largest in years with strong directional moves in either direction, including the 2017 and 2019 tech-led bull runs, the 2021 reopening, the 2024 AI-led extension, and notably 2022 where the trend-short engine and mean-reversion engine produced positive returns through a year that took the S&P 500 down 18 per cent. The single underperformance year was 2018, where the February volatility spike and the Q4 sell-off produced a -5.1 per cent strategy result against -4.6 per cent for the benchmark, a half-percentage-point gap. The structural edge is expected to be at least as visible against a long-run benchmark closer to the 7 to 10 per cent average than it has been against the far stronger backdrop of the past decade.

No guarantee is made about future performance. The point is that the strategy has beaten a very hot benchmark. A cooler benchmark is historically where this type of system has had its best relative years.

To step through the mechanics, the paperwork to open the Interactive Brokers account, and whether the strategy is a fit for your portfolio, book a callback with an MFAM adviser. The administrative side of running the strategy, including custody, signal delivery and adviser execution, is set out in How You Access the Strategy at the bottom of this page.

Request a Callback

Prefer to learn the fundamentals first? Access the free trading course.

Alpha vs Beta

The first number most investors look at is return. A 52.6 per cent annualised strategy looks objectively better than a 14.8 per cent benchmark. The raw comparison hides something important though, because not all returns are created equal. A strategy's return number has to be broken down into two very different components before it can be judged honestly.

Beta is market exposure

Beta is the portion of a strategy's return that comes from simply being in the market. If the S&P 500 goes up 10 per cent in a year, a fully invested portfolio that moves in lockstep with it will also go up roughly 10 per cent. Nothing clever happened. The market rose, and the investor was along for the ride. Anyone willing to press a single buy button on an index ETF can collect beta. It requires no analysis, no timing, and no discipline.

Critically, beta is not free. It comes with full participation in market losses. The same passive portfolio that captured the upside will sit through the full drawdown when the market falls. The investor has no defence against a bear market. They accept whatever path the market delivers, peaks and troughs alike.

Alpha is return that does not come from market exposure

Alpha is what is left over after the beta portion has been accounted for. It is the portion of return that reflects a genuine edge, whether from timing, selection, risk management, or all three. Alpha is what separates an active strategy from a passive one. When a strategy delivers return that cannot be explained by market exposure alone, and delivers it with risk characteristics that are measurably different from a passive market allocation, that is alpha.

The distinction matters because alpha and beta are valued very differently. Beta is cheap to buy, available for a fraction of a per cent in management fees through any index ETF, even though it carries the full market drawdown. Alpha is scarce, because it requires a source of edge that most market participants do not have. Decades of academic and industry research confirm that consistent alpha is rare, and strategies that can demonstrate it are treated as materially different from strategies that simply ride the market higher.
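One common way to separate the two components is a straight-line fit of strategy returns against benchmark returns: the slope is beta and the intercept is alpha. The sketch below is a generic illustration of that decomposition, not a description of how MFAM measures its own alpha. The return series are made-up numbers and the function name is hypothetical.

```python
from statistics import mean


def beta_and_alpha(strategy_returns, benchmark_returns):
    """Least-squares fit of strategy = alpha + beta * benchmark, per period."""
    if len(strategy_returns) != len(benchmark_returns):
        raise ValueError("return series must have the same length")
    mean_s = mean(strategy_returns)
    mean_b = mean(benchmark_returns)
    covariance = sum((s - mean_s) * (b - mean_b)
                     for s, b in zip(strategy_returns, benchmark_returns))
    benchmark_variance = sum((b - mean_b) ** 2 for b in benchmark_returns)
    beta = covariance / benchmark_variance   # sensitivity to the market
    alpha = mean_s - beta * mean_b           # per-period return not explained by the market
    return beta, alpha


# Hypothetical per-period returns, for illustration only (not strategy data).
benchmark = [0.02, -0.01, 0.03, -0.02, 0.01]
passive = list(benchmark)                      # pure market exposure
active = [0.5 * r + 0.01 for r in benchmark]   # half the exposure plus a constant edge

print(beta_and_alpha(passive, benchmark))
print(beta_and_alpha(active, benchmark))
```

The passive series comes out with a beta of one and no alpha, while the second series shows half the market sensitivity and a positive intercept, which is the pattern the text describes as return that market exposure alone does not explain.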

How this strategy generates alpha

This strategy's outperformance versus the S&P 500 is not a function of taking more market risk. The opposite is true. Over the May 2017 to April 2026 window it produced a 52.6 per cent annualised return against 14.8 per cent for the S&P 500, while simultaneously containing its maximum drawdown to 29.5 per cent versus 33.7 per cent for the benchmark. Higher return and lower drawdown at the same time. That combination cannot be produced through beta alone. More market exposure would have produced deeper drawdowns, not shallower ones.

The strategy uses rules to pull capital out of the market when conditions no longer support its trading signals, and to deploy capital more aggressively when conditions do. A passive buy-and-hold investor has no mechanism to do either. They are always fully exposed regardless of whether the environment is favourable. The strategy's alpha comes from this selective exposure, applied mechanically by the rules rather than discretionarily by a human. The risk-adjusted metrics in the next section are where this alpha becomes visible, and the regime filter that drives the selective exposure is explained further down the page.

Risk-Adjusted Return Profile

Raw return alone understates the quality of the strategy. The cleanest way to judge a leveraged trading system is to compare its return per unit of worst-case drawdown taken to get there.

The Calmar ratio is the headline metric for this type of system. It divides the annualised return by the worst peak-to-trough drawdown across the period, which captures the question every leveraged-ETF investor should be asking, namely how much return was extracted for each unit of drawdown actually experienced. The strategy's Calmar of 1.78 is roughly four times the S&P 500's 0.44 over the same window. Headline CAGR is 52.6 per cent versus 14.8 per cent, and despite trading two- and three-times leveraged instruments the strategy's worst drawdown was -29.5 per cent against -33.7 per cent for the benchmark. The drawdown containment is what makes the higher CAGR meaningful, because a leveraged strategy that produced the same return through deeper drawdowns would not be the same product.
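The Calmar arithmetic can be checked directly from the figures quoted on this page. The snippet is a minimal sketch; the function name is hypothetical, and the inputs are the headline CAGR and maximum drawdown numbers stated above.

```python
def calmar_ratio(annualised_return_pct: float, max_drawdown_pct: float) -> float:
    """Annualised return divided by the size of the worst peak-to-trough drawdown."""
    return annualised_return_pct / abs(max_drawdown_pct)


strategy_calmar = calmar_ratio(52.6, -29.5)    # strategy figures quoted on this page
benchmark_calmar = calmar_ratio(14.8, -33.7)   # S&P 500 figures quoted on this page

print(round(strategy_calmar, 2), round(benchmark_calmar, 2))
print(round(strategy_calmar / benchmark_calmar, 1))
```

The two ratios round to 1.78 and 0.44, and their quotient is a little over four.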

Sharpe and Sortino ratios are not the most useful metrics for this kind of system. Both are built on period-by-period return dispersion: Sharpe on total volatility around the mean, Sortino on downside deviation only. A leveraged-ETF strategy is engineered to produce large positive moves on the days the underlying setups fire. Those large moves are exactly what the strategy exists to capture, and Sharpe in particular penalises them, while neither ratio measures the peak-to-trough path on which this outcome is best judged. The strategy's Sharpe ratio over the period was 1.226 and its Sortino was 1.321, both shown alongside Calmar in the chart above, but the headline question for a strategy of this design is whether the return justifies the worst drawdown taken, which is what the Calmar plus the drawdown chart together describe.

The mechanical source of the alpha is an intentional reduction in market exposure during adverse regimes, combined with an opportunistic short-side engine that profits when the long side is in cash. That regime-shifted exposure is what produces a higher return at a shallower drawdown, and is covered in the engine and regime filter sections below.

Why Maximum Drawdown Is the Number That Actually Matters

Most investors focus on return. Experienced investors focus on drawdown, because the arithmetic of recovery is unforgiving. A loss and a subsequent gain of the same percentage do not cancel out. The deeper the drawdown, the more disproportionate the recovery required.

A 50% drawdown requires a 100% gain to break even, which at historical equity market return rates takes roughly seven years. A 75% drawdown requires a 300% gain, a fourfold increase in the remaining capital, and realistically may never be recovered within an investor's remaining time horizon. Drawdown is not just a number on a chart. It is a direct tax on future compounding, and in severe cases it ends the compounding journey entirely.
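The recovery arithmetic follows from one identity: after losing a fraction d, capital must grow by 1 / (1 - d) - 1 to regain the prior peak. The sketch below works that through for the drawdowns mentioned on this page. The function names are hypothetical, and the time-to-recover figure assumes a constant 10 per cent annual return, which is a simplification.

```python
import math


def gain_to_recover(drawdown: float) -> float:
    """Gain needed to return to the prior peak after a drawdown (both as fractions)."""
    if not 0.0 <= drawdown < 1.0:
        raise ValueError("drawdown must be in [0, 1)")
    return 1.0 / (1.0 - drawdown) - 1.0


def years_to_recover(drawdown: float, annual_return: float) -> float:
    """Years of compounding at a constant annual return needed to regain the peak."""
    return math.log(1.0 + gain_to_recover(drawdown)) / math.log(1.0 + annual_return)


for dd in (0.295, 0.337, 0.50, 0.75):
    print(f"{dd:.1%} drawdown -> {gain_to_recover(dd):.1%} gain to break even")

# Assumption: a constant 10 per cent a year, in line with the long-run nominal average.
print(round(years_to_recover(0.50, 0.10), 1))
```

The required gain rises faster than the drawdown itself, which is why a few percentage points of extra depth matter more at the deep end of the range.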

The gap between the strategy's -29.5% maximum drawdown and the S&P 500's -33.7% may look modest at first glance, but the relevant comparison is not the headline drawdown number alone. The strategy delivered a 52.6 per cent annualised return for that drawdown, against a 14.8 per cent return for a benchmark that took a slightly deeper one. Per unit of worst-case drawdown experienced, the strategy's annualised return was roughly four times the benchmark's. The Calmar ratio is the formal expression of that, and it is the metric that matters most for a leveraged-ETF system because it answers the question every drawdown-aware investor is asking, namely how much return was actually extracted for each unit of pain endured.

This is also why any strategy quoting strong headline returns should be scrutinised for the path it took to get there. Large returns achieved through large drawdowns are statistically fragile. The strategy here is engineered to contain the left tail first and let returns compound as a consequence, rather than the other way around.

Plotted continuously, the strategy's drawdown profile sits above the S&P 500's through most of the period. The worst trough was -29.5% versus -33.7% for the benchmark, and recovery back to new highs tends to happen faster. The 2020 COVID shock and the 2022 bear market are both visibly shallower and shorter for the strategy, which is the regime filter cutting exposure during adverse periods, and the trend-short engine taking over when long-side conditions deteriorate.

Historical Drawdown Events in Context

A feature of a leveraged-ETF strategy worth understanding is that its largest drawdowns tend to follow its largest rallies. The two- and three-times leveraged instruments produce outsized spikes during favourable regimes, and any subsequent consolidation is measured against that spike. The headline drawdown number reflects how much of a prior rally was given back, not how much base capital was put at risk.

A second, structural driver of these events is the trend engines' own design. Trend-following systems, on either the long or short side, are built to capture the bulk of a sustained move, not to exit at the top. The exit signal requires confirmation that the trend has weakened, which by construction occurs after the peak rather than at it. That confirmation requirement is precisely what allows the system to ride extended trends without being shaken out by mid-trend pullbacks. The cost of that patience is that a portion of the final leg of every trend is given back before the exit fires. This giving-back is not the strategy failing. It is the engine behaving exactly as designed, accepting a few percentage points of peak-to-exit slippage in exchange for the ability to hold winning positions through long, profitable runs. A trend system optimised to avoid give-back would also exit much earlier in its winners, sacrificing the tail of outlier returns that the long-tail distribution depends on.

Across May 2017 through April 2026 the strategy experienced six completed peak-to-trough drawdowns of 20% or more, with a seventh event currently in recovery. The cluster between -20.9% and -29.5% is consistent across regimes, which is the operating point the volatility-adjusted stops and per-leg risk caps are calibrated to enforce. No completed drawdown extended materially beyond the design envelope, and no event left the curve below an earlier trough by a meaningful margin. The strategy's all-time maximum drawdown of -29.5% sits inside event 4, the March 2022 rate-hike sell-off. Each red band on the chart marks one event with its peak-to-trough magnitude on the upper badge, and each green arrow marks the trough-to-new-high recovery that followed. The numbers below the chart correspond to the badges on each event.

Each event follows the same pattern. A sustained rally produces a new all-time high, the underlying ETFs reach a regime inflection, and the strategy gives back a portion of the unrealised gain before the regime filter, the volatility-adjusted stops or the trend-short engine cut net exposure. Each drawdown is substantial in peak-to-trough terms, but the magnitude clusters in a tight band rather than running away to deeper levels. That tightness is the signature of a working risk-control framework.

- 2018 Volmageddon and rolling 2018 sell-off (-27.8%, peak Jan 2018, trough Feb 2019, recovered Apr 2019). The February 2018 volatility spike, the Q4 2018 equity drawdown and the early-2019 trough rolled into one extended event lasting roughly thirteen months. Volatility-adjusted stops exited long positions through each leg as realised vol expanded. The strategy compounded +104% from this trough to its next all-time high in January 2020.

- Q1 2020 COVID crash (-25.3%, peak Jan 2020, trough Feb 2020, recovered Jul 2020). An extremely rapid sell-off across global equities. The trough was reached in just six weeks. Volatility-adjusted stops exited losing positions and the trend-short engine took over as net exposure rotated. The strategy compounded +60% from this trough to its next all-time high in September 2020.

- Q3 2020 post-COVID retrace (-20.9%, peak Sep 2020, trough Sep 2020, recovered Nov 2020). A sharp three-week consolidation in September 2020, in the run-up to the US election, after a multi-month rally. The trough sat well above the COVID-crash trough earlier in the year, illustrating the strategy compounding through cycles. The strategy compounded +227% from this trough to its next all-time high in March 2022.

- 2022 rate-hike sell-off (-29.5%, peak Mar 2022, trough Apr 2022, recovered Aug 2022), the all-time maximum drawdown. The first major leg of the Fed tightening cycle. This is the deepest drawdown in the strategy's history and sits exactly at the design envelope. The trend-long engine moved to cash, the trend-short engine took over net exposure, and the book recovered to a new high inside five months. The strategy compounded +73% from this trough to its next all-time high in October 2022.

- Late 2022 to early 2023 banking stress (-22.0%, peak Oct 2022, trough Mar 2023, recovered May 2023). A persistent grind down through the back half of 2022 and into the March 2023 Silicon Valley Bank failure and regional-bank stress episode. The directional engines rotated as macro regime signals shifted. The strategy compounded +243% from this trough to its next all-time high in May 2024, the largest run-up in the strategy's history.

- Mid-2024 to late-2024 chop (-26.8%, peak May 2024, trough Nov 2024, recovered Apr 2025). A persistent rotation between mega-cap leadership and broader-market participation through US election year. The directional engines whipsawed before the trend-short engine and mean-reversion engine stabilised the book. The strategy compounded +121% from this trough to its next all-time high in October 2025.

- Q4 2025 to Q1 2026 sell-off (-25.7%, peak Oct 2025, trough Mar 2026, recovery in progress). A two-stage sell-off driven by escalating Iran and Middle East conflict, renewed tariff and policy uncertainty, and a sharp momentum unwind through February and March 2026 where the S&P 500 fell 8.9% in four weeks and the underlying leveraged ETFs fell 33 to 43% peak to trough. Sits well within the design envelope and is the most recent of the seven events.
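Events like the seven listed above are typically identified mechanically from an equity curve: track the running high, record the lowest point reached before that high is regained, and keep the episodes whose depth exceeds a threshold. The sketch below is a generic illustration of that bookkeeping, not MFAM's own tooling. The function name and the sample curve are hypothetical.

```python
def drawdown_episodes(equity, threshold=0.20):
    """Return (peak_index, trough_index, recovery_index, depth) for each episode.

    An episode starts at a running high and ends when equity regains that high.
    recovery_index is None if the curve has not recovered by the end of the data.
    """
    episodes = []
    peak_i = 0
    trough_i = 0
    for i, value in enumerate(equity):
        if value >= equity[peak_i]:
            # Prior high regained: close out the episode that just ended.
            depth = 1.0 - equity[trough_i] / equity[peak_i]
            if depth >= threshold:
                episodes.append((peak_i, trough_i, i, depth))
            peak_i = trough_i = i
        elif value < equity[trough_i]:
            trough_i = i
    # An episode that is still open at the end of the series.
    depth = 1.0 - equity[trough_i] / equity[peak_i]
    if depth >= threshold:
        episodes.append((peak_i, trough_i, None, depth))
    return episodes


# Hypothetical equity curve, for illustration only (not strategy data).
curve = [100, 120, 90, 110, 125, 130, 100]
for episode in drawdown_episodes(curve):
    print(episode)
```

On the sample curve this yields one completed episode and one still in recovery, mirroring the distinction the list draws between completed events and the most recent one.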

The honest caveat

An investor whose capital enters right at a peak experiences the full peak-to-trough drawdown, because their starting net liquidation value (NLV) is that peak. The compounding visible across the nine-year curve is a product of staying invested through multiple cycles, not of how the strategy behaves in any individual event. Anyone deploying capital into the strategy should size their exposure against the ability to tolerate a 28 to 30 per cent drawdown from the day they enter, which is the range the system is engineered to contain drawdowns within.

Frequent but Bounded — the Diversification Fingerprint

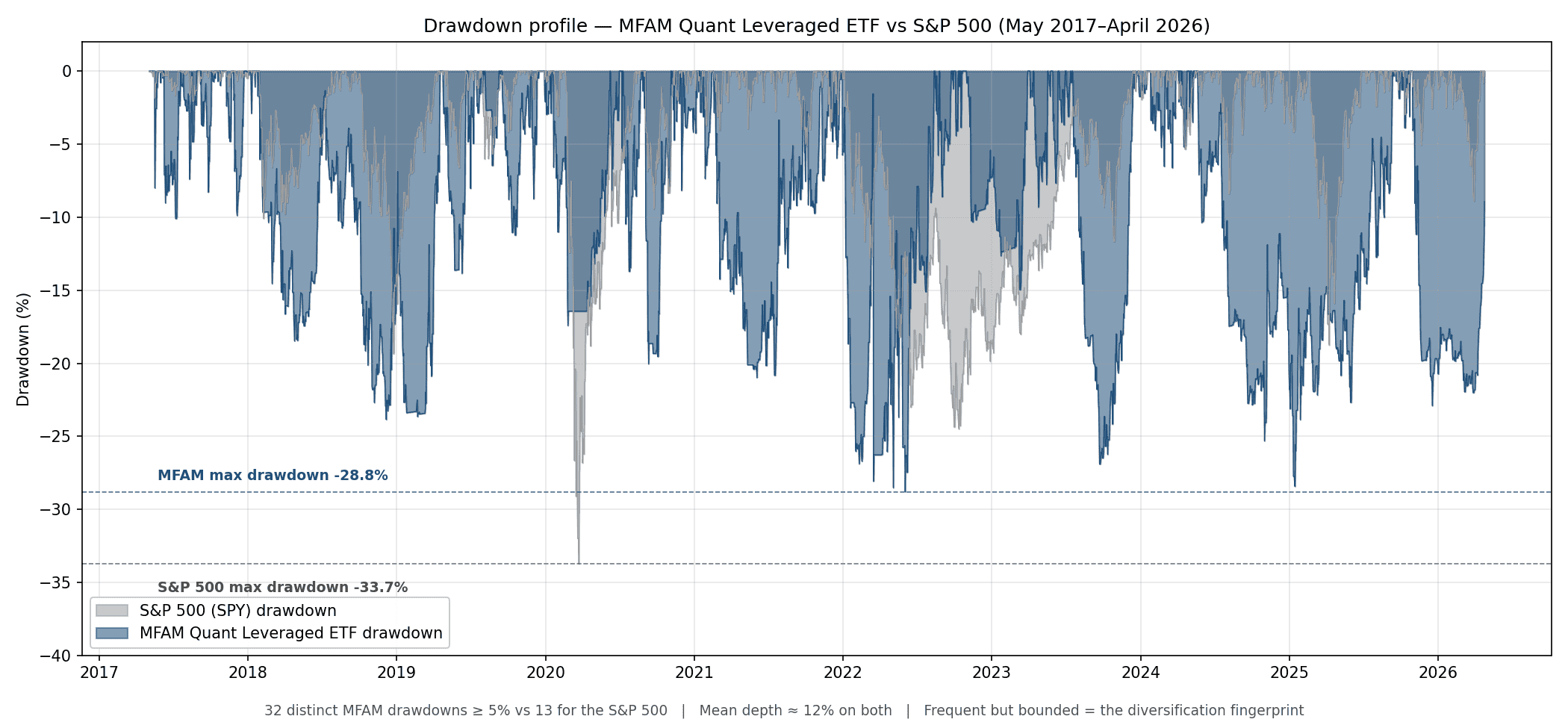

If the maximum drawdown number tells you how deep the worst event was, the drawdown profile tells you how the strategy actually feels to live with day to day. The picture below plots the strategy's drawdown against the S&P 500 over the same nine-year window.

Two things stand out. First, the strategy is in some kind of drawdown roughly nine days out of every ten — there are thirty-two distinct drawdown events of five per cent or deeper across the window, against thirteen for the S&P 500. Second, despite that frequency, the deepest drawdown is shallower than the S&P's: -29.5 per cent versus -33.7 per cent.

That combination is the diversification fingerprint of the strategy. Thirteen leveraged instruments running across three engines means new local peaks are reached constantly, which means small recedes from those peaks happen constantly. The strategy is almost never sitting on an all-time high. But the same diversification means no single engine's bad month can drag the whole book past the design envelope of around thirty per cent. The drawdowns are frequent, but they are bounded.
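The "days in drawdown" statistic is simply the share of observations that sit below the running high of the equity curve. A minimal sketch, using a hypothetical function name and a made-up curve rather than strategy data:

```python
def underwater_fraction(equity):
    """Share of observations that sit below the running high of the curve."""
    running_high = float("-inf")
    below = 0
    for value in equity:
        running_high = max(running_high, value)
        if value < running_high:
            below += 1
    return below / len(equity)


# Hypothetical equity curve, for illustration only (not strategy data).
curve = [100, 101, 100, 102, 101, 100, 103, 102, 101, 102]
print(underwater_fraction(curve))
```

A curve that makes frequent marginal new highs spends most of its time slightly below the latest one, so a high underwater fraction says nothing by itself about how deep the dips are.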

This profile has a side-effect on the headline statistics. The strategy's Sharpe ratio of 1.226 is good but not extraordinary, because Sharpe penalises every move equally — including the large up days that drive the compounding. The Calmar ratio of 1.78, which only cares about how deep things ever got, is closer to four times the S&P's. The Calmar gap is the more honest read for a strategy of this design, because it captures the asymmetry: shallow drawdowns paired with hard up moves.


How It Works

The strategy runs three engines in parallel, each trading different signals across different instruments. The three engines are designed to work in different market regimes so that the strategy as a whole has exposure to trending up, trending down, and mean-reverting environments. The drawdown containment shown above is not an accident. It is the direct mechanical consequence of how the three engines are designed to switch leadership across regimes.

Trend-Long Engine

Identifies established uptrends using a combination of momentum indicators, moving-average filters, and a regime-detection layer. The engine stays out of the market when broader conditions do not support long-side trend-following. Exits are driven by volatility-adjusted trailing stops that tighten as a trade moves in its favour.
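The actual stop rules are not disclosed on this page, so the following is only a generic sketch of what a volatility-adjusted trailing stop that tightens with profit can look like. Everything in it is an assumption chosen for illustration: the volatility measure (mean absolute close-to-close change), the lookback, the two multiples and the gain level at which the stop tightens.

```python
def trailing_stop_levels(closes, lookback=5, wide_multiple=3.0,
                         tight_multiple=1.5, tighten_after_gain=0.20):
    """Stop levels for a hypothetical long position entered at closes[lookback].

    The stop trails the highest close since entry by a multiple of recent
    volatility, uses a smaller multiple once the open gain is large enough,
    and is never allowed to move down.
    """
    entry_price = closes[lookback]
    highest = entry_price
    stop = float("-inf")
    stops = []
    for i in range(lookback, len(closes)):
        window = closes[i - lookback:i + 1]   # lookback + 1 closes give lookback changes
        volatility = sum(abs(b - a) for a, b in zip(window, window[1:])) / lookback
        highest = max(highest, closes[i])
        gain = highest / entry_price - 1.0
        multiple = tight_multiple if gain >= tighten_after_gain else wide_multiple
        stop = max(stop, highest - multiple * volatility)   # ratchet: never loosen
        stops.append(stop)
    return stops


# Hypothetical closing prices, for illustration only.
closes = [10, 10.5, 10, 10.5, 10, 10.5, 11, 11.5, 12, 12.5, 13, 13.5]
stops = trailing_stop_levels(closes)
print(stops)
```

The design point the sketch captures is the ratchet: the stop distance scales with recent volatility, but the stop level itself only ever rises, so gains already locked in are not handed back when volatility expands.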

Mean-Reversion Engine

Identifies short-term oversold conditions in volatile sectors and takes positions sized to capture rebounds. Exits again use volatility-adjusted stops, with discipline around capturing the first material reversion move rather than holding for extended trends.

Trend-Short Engine

The mirror image of the trend-long engine, applied to the inverse leveraged ETFs of the same underlying indices. It activates when sustained downtrends are confirmed by the regime layer, allowing the strategy to extract return from extended sell-offs rather than sitting in cash through them. Exits use the same volatility-adjusted stop framework as the trend-long engine.

Three Engines, Three Trade Profiles

The three engines produce very different trade shapes. The two trend engines are designed to catch extended directional moves and hold them through a sustained leg, which typically means multi-week to multi-month hold times. The mean-reversion engine is the opposite, entering after a short sharp dislocation and exiting as soon as price snaps back, often within a few days. The performance of the strategy is driven by the distribution of outcomes across hundreds of trades rather than any single winner.

How the Three Engines Switch Across Regimes

The three engines have substantially uncorrelated exposure patterns. When long-side trend conditions are poor, the regime filter pulls the trend-long engine into cash. When short-side trend conditions activate, the trend-short engine takes over net exposure. The mean-reversion engine fills in when neither trend regime is dominant. In practice, leadership rotates depending on what the market is doing.
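The rotation described above can be pictured as a classifier that looks at recent price behaviour and names the engine that should lead. The strategy's regime filter is proprietary, so the sketch below substitutes a deliberately simple stand-in: the gap between a fast and a slow average of closing prices. The function name, window lengths and threshold band are all hypothetical.

```python
def leading_engine(closes, fast=3, slow=6, band=0.02):
    """Name the leading engine from the gap between a fast and a slow average.

    Illustrative stand-in only; this is not the strategy's regime filter.
    """
    if len(closes) < slow:
        raise ValueError("need at least `slow` closes")
    fast_avg = sum(closes[-fast:]) / fast
    slow_avg = sum(closes[-slow:]) / slow
    gap = fast_avg / slow_avg - 1.0
    if gap > band:
        return "trend-long"        # sustained rise: long-side trend conditions
    if gap < -band:
        return "trend-short"       # sustained fall: short-side trend conditions
    return "mean-reversion"        # neither trend regime is dominant


# Hypothetical price paths, for illustration only.
print(leading_engine([100, 102, 104, 106, 108, 110]))
print(leading_engine([110, 108, 106, 104, 102, 100]))
print(leading_engine([100, 101, 100, 101, 100, 101]))
```

In this simplified picture only one engine leads at a time. The page describes engines running concurrently in some regimes, so a fuller model would assign exposure per engine rather than return a single label.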

The 2018 Q4 sell-off and the 2022 rate-hike bear are the clearest illustrations. The trend-long engine was held in cash for extended periods through both, while the trend-short engine carried directional exposure on the way down and the mean-reversion engine captured the intervening relief rallies. In strongly trending bull years such as 2019, 2021 and 2024, the trend-long and mean-reversion engines run concurrently at high exposure while the trend-short engine sits in cash.

The mechanical consequence of this rotation is that the strategy is rarely flat across all three engines, and is also rarely fully exposed across all three at the same time. Net market exposure adapts to the current regime rather than running at a fixed level. This is the structural reason the strategy's maximum drawdown over the historical period was -29.5% versus -33.7% for the S&P 500, despite trading two- and three-times leveraged instruments. The regime filter and the trend-short engine together remove or reverse capital exposure when the environment does not support the long-side signal. Drawdown containment, and the alpha that comes with it, follows as a side effect of that discipline.

Why combining the three engines matters

Running only a trend-long strategy exposes an investor to years-long periods of poor performance when markets stop trending up. Running only a mean-reversion strategy leaves alpha on the table during extended bull runs. Running only a short-side strategy is structurally negative-carry against rising long-run equities. Combining all three gives the strategy something to do in every regime, which is why the combined results are meaningfully smoother than any engine alone.

Proprietary Signal Calibration

All three engines use a proprietary approach to signal tuning, allowing faster regime detection than conventional moving-average or crossover methodologies. This responsiveness is a core part of the edge and comes from extensive quantitative research rather than any single technical indicator.

For a plain-language walkthrough of the trend, mean-reversion, regime-filter and volatility-stop concepts this strategy is built on, access the free MFAM trading course.

Instrument Selection

The strategy trades thirteen leveraged exchange-traded funds, five on each trend engine and three on the mean-reversion engine. Instrument selection is deliberate and follows three principles. The first is that each instrument must sit in a market segment whose behaviour matches the engine trading it. Trend legs are placed in markets with a history of long, persistent directional moves. Mean-reversion legs are placed in sectors where sharp dislocations reliably snap back. The second principle is non-overlapping exposure. Each leg tracks a distinct sector or geography, so an adverse event in one does not cascade across the book. The third is liquidity. Every instrument is a top-tier ETF with deep order books, so fills are reliable and position sizing is not constrained.

Leverage is used intentionally. The two- and three-times-leveraged structure amplifies every signal, allowing the system to extract meaningful return from moves that would be uneconomic to trade in unleveraged form. The same leverage is what makes stop-loss discipline and regime filtering non-negotiable, and the strategy is engineered around that requirement.

Trend-Long Engine Instruments

US large-cap technology, traded here through TQQQ, has produced some of the longest and cleanest trends of any major equity index over the past decade. The constituents are dominated by globally scaled businesses whose earnings trajectories unfold over quarters and years, not days, which gives a trend engine meaningful runway once a move is established.

The semiconductor cycle, traded here through SOXL, is one of the most structurally trending sectors in global equities. It combines a multi-year secular growth layer, driven by compute demand, artificial intelligence and industrial electrification, with a cyclical inventory cycle that creates sustained directional moves in both directions. Trend-following captures both.

Chinese equities, traded here through YINN, move on a different set of drivers than US markets, including domestic policy cycles, stimulus rounds and property-sector dynamics. This non-US exposure gives the trend-long engine an independent opportunity set. When US markets stall, Chinese markets may be trending separately, and the system can capture that without taking additional US risk.

The artificial-intelligence and infrastructure build-out is the dominant multi-year capex theme in US equities, and the basket sitting underneath AIBU captures that flow at the index level rather than at any single name. Earnings revisions across the cohort move in the same direction for quarters at a time when capex rolls forward, which is exactly the kind of persistent direction the trend-long engine is designed to ride.

Biotech, traded here through LABU, is one of the most idiosyncratic and headline-driven sectors in US equities, with binary clinical-trial outcomes, FDA decisions and acquisition flow producing sharp and sustained moves. The same drivers that pin the sector to multi-month sideways action also produce extended trending runs once a cycle establishes, whether through sustained M&A activity, pipeline progress across the cohort, or capital rotating back into small-cap biotech after a long drought. The trend-long engine is built to ride those phases when they fire.

Mean-Reversion Engine Instruments

Defense names, traded here through DFEN, move sharply on geopolitical headlines, defense-budget cycles and programme-specific news. The underlying thesis, sustained government spending, is structurally stable, so short-term dislocations caused by headlines tend to reverse quickly. That pattern is a textbook setup for a mean-reversion engine.

Energy is one of the most volatile sectors in US equities, with sharp moves driven by oil-price shifts, OPEC decisions and geopolitical events. The sector's underlying earnings power is anchored by large integrated producers whose long-term economics do not turn on a single headline, so the short-term overreactions reliably fade. That gives the mean-reversion engine a high-quality flow of dislocation-and-snapback setups.

Junior gold miners run on operating leverage to the gold price. A small move in the underlying commodity produces an outsized move in the equity, and those moves frequently overshoot in both directions on macro headlines, real-yield prints and dollar swings. The fade back to fair value is reliable enough to be a textbook reversion setup, and JNUG carries the additional property of being structurally uncorrelated with the rest of the book, since gold-miner pricing is driven by real rates and the dollar rather than by US earnings or Chinese policy.

Trend-Short Engine Instruments

The inverse counterpart to TQQQ. When the regime filter confirms a sustained downtrend in US large-cap technology, the trend-short engine takes a position in SQQQ to capture the move. The same momentum and volatility-stop framework applies, mirrored to the short side of the same underlying index.

The inverse counterpart to SOXL. Semiconductor sell-offs are typically as sharp and persistent as semiconductor rallies, driven by the same cyclical inventory dynamics in reverse. The trend-short engine captures these legs when the regime filter confirms the downtrend.

The inverse counterpart to YINN. Chinese equity sell-offs driven by policy tightening, property-sector stress or geopolitical events tend to be sustained and offer extended trend-short opportunities that are largely uncorrelated with US-side directional moves.

The inverse counterpart to AIBU. AI-thematic drawdowns in 2022 and the mid-2024 chop both ran for months once they were established, driven by the same capex-cycle dynamics in reverse. The trend-short engine takes AIBD when the regime filter confirms the downtrend, applying the same volatility-stop framework as the long-side engine.

The inverse counterpart to LABU. Biotech sell-offs run for the same reasons biotech rallies do, often in extended drawdowns when capital rotates out of small-cap risk. The trend-short engine takes LABD when the regime filter confirms the downtrend, applying the same volatility-stop framework as the long-side engine.

Every Trade Matters

Quantitative strategies rely on statistical discipline. The distribution of individual trade outcomes over the backtest period demonstrates why taking every signal, without filtering, is a structural requirement.

Trade outcomes follow a long-tailed distribution. Most trades produce small positive or small negative returns, clustered near the mean, with a long right tail of outsized winners. The win rate across the period was approximately 54 per cent.

Equally important is what happens on the left-hand side of the distribution. Most losing trades sit between zero and minus ten per cent, and the ten worst losses all sat between -26 and -42 per cent on the underlying leveraged ETF. None of the ten worst trades exceeded the -42 per cent floor that the volatility-stop and per-leg risk-cap framework is engineered to enforce. There is no single catastrophic blow-up trade that unwound prior gains. The left side of the distribution is bounded by the risk-control framework rather than by chance.

Two risk controls produce this shape. The first is stop-loss discipline. Every position carries a volatility-adjusted stop that tightens as a trade moves in its favour, so losing trades are exited mechanically at a pre-defined loss rather than allowed to deteriorate. The second is per-leg risk sizing, which targets a fixed dollar-risk budget per trade based on each instrument's recent realised volatility. High-volatility instruments receive smaller position sizes so that the dollar loss at the stop is consistent across the book. Combined, these two controls are what allow the strategy to run leveraged instruments without carrying catastrophic single-trade risk, and are the reason the left tail of the distribution stays bounded.
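As a rough illustration of the per-leg sizing idea, the sketch below sizes a position so that the dollar loss at the stop matches a fixed budget. The function name, the $500 budget, the $40 price and the stop distances are all hypothetical values chosen for the example, and the assumption that the stop distance widens with realised volatility is a simplification, not MFAM's actual sizing rule.

```python
def position_size(risk_budget: float, price: float, stop_distance: float) -> int:
    """Whole shares sized so that a stop-out loses about `risk_budget` dollars.

    `stop_distance` is the stop's distance from entry as a fraction of price,
    and is assumed here to widen with the instrument's realised volatility.
    """
    if risk_budget < 0 or price <= 0 or stop_distance <= 0:
        raise ValueError("risk_budget must be non-negative; price and stop_distance positive")
    loss_per_share = price * stop_distance
    return int(risk_budget // loss_per_share)


# Hypothetical numbers: the same $500 risk budget on a calmer and a noisier leg.
calm_shares = position_size(500.0, price=40.0, stop_distance=0.125)
noisy_shares = position_size(500.0, price=40.0, stop_distance=0.25)

# Dollar loss if each position is stopped out exactly at its stop.
calm_loss = calm_shares * 40.0 * 0.125
noisy_loss = noisy_shares * 40.0 * 0.25
```

In this example the noisier leg receives half the share count, and both positions lose the same dollar amount if stopped out, which is the property the paragraph above describes.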

Why every signal is taken

The strategy takes every signal as it fires, without discretionary filtering. Long-tailed distributions reward consistency, because the largest winners cannot be reliably identified in advance. Skipping setups that look low-conviction would risk passing on the very tail trades that compound the curve, so the rules-based discipline is to act on every signal and let the distribution do the work. The hold-time profile reinforces this. The trend engines hold winners for weeks to months, the mean-reversion engine cycles within days, and the diversity of trade shapes is what makes the combined return path smoother than any single engine alone.

To step through the mechanics, the paperwork to open the Interactive Brokers account, and whether the strategy is a fit for your portfolio, book a callback with an MFAM adviser. The administrative side of running the strategy, including custody, signal delivery and adviser execution, is set out in How You Access the Strategy at the bottom of this page.

Request a Callback. Prefer to learn the fundamentals first? Access the free trading course.

Is This Just Curve Fitting?

A fair question to ask of any backtested strategy is whether the performance is real or whether the rules have been retro-fitted to the data until the equity curve looks good. This is called curve fitting, and it is the single most common failure mode of quantitative research. A curve-fit strategy will show a beautiful historical track record and then fall apart the moment it is asked to trade on data it has not seen. The numbers on the chart were engineered into existence, not discovered.

Curve fitting is easy to do accidentally. Any strategy has parameters, and any parameter can be tuned until the historical result is maximised. If you try enough combinations on the same dataset, something will fit that dataset almost perfectly by coincidence alone. The fit says nothing about the future because the rules were selected for that specific history, not for any underlying market behaviour. A properly engineered quantitative strategy has to be built in a way that makes this kind of overfitting structurally difficult.

How to tell curve fitting from a real edge

The cleanest test is a parameter sweep. Take the finished strategy, vary its core parameters over a wide range, and plot every resulting equity curve. If the strategy's performance collapses when the parameters move even slightly, the historical result was a lucky coincidence at one specific parameter setting. If the curves instead form a tight cluster, with every variant producing similar shape and similar ending value, the edge lives in the underlying rules, not in the specific numbers chosen.

The parameter sweeps shown below were generated on the original six-leg development build of the strategy, which contains the trend-long and mean-reversion engines tested in isolation before the trend-short engine and the additional leveraged ETFs were folded into the deployed configuration. They are shown to demonstrate parameter-stability methodology and are not intended to reproduce the headline performance figures above, which are calculated on the full thirteen-leg deployed configuration.
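For readers who want to see the shape of a parameter sweep in code, here is a minimal harness. It assumes a backtest can be called with two parameters and returns an ending value. The `toy_backtest` function is a placeholder invented for this example and has nothing to do with the real strategy. The spread measure is one simple way to quantify how tight the cluster is, not necessarily the one used in MFAM's research.

```python
from itertools import product
from typing import Callable, Dict, Sequence, Tuple


def sweep(backtest: Callable[[float, float], float],
          grid_a: Sequence[float],
          grid_b: Sequence[float]) -> Dict[Tuple[float, float], float]:
    """Run `backtest` for every (a, b) pair and collect the ending values."""
    return {(a, b): backtest(a, b) for a, b in product(grid_a, grid_b)}


def relative_spread(results: Dict[Tuple[float, float], float]) -> float:
    """(max - min) / mean of ending values; smaller means a tighter cluster."""
    values = list(results.values())
    return (max(values) - min(values)) / (sum(values) / len(values))


# Placeholder backtest, NOT the real strategy: the ending value drifts
# gently with the parameters instead of collapsing away from one setting.
def toy_backtest(a: float, b: float) -> float:
    return 100.0 + a + b


results = sweep(toy_backtest, [1, 2, 3, 4, 5], [1, 2, 3, 4, 5])
spread = relative_spread(results)
```

A 5 by 5 grid produces twenty-five results. A small relative spread corresponds to the tight cluster described above, while a curve-fit strategy would show one or two large values and a wide spread.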

A well-built strategy has to be robust on both sides of every trade, the entry and the exit. Sweeping only one side leaves the other side unexamined. Both sides are tested independently below.

Exit-side robustness, the volatility-stop sweep

The exit side of the strategy is governed by volatility-adjusted stops. Each position is given a stop whose distance scales with how noisy the underlying instrument currently is, so a calm market produces tight stops and a noisy market produces wider ones. The question is whether the edge depends on the specific stop multiples chosen, or whether it holds across a range of reasonable values.
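A volatility-scaled stop can be sketched as follows. This is an illustration under stated assumptions: volatility is approximated by the mean absolute close-to-close change over a short window (a stand-in for a measure such as average true range), the stop sits a fixed multiple of that below the latest close, and it is only allowed to ratchet upward. The window, the multiple and the ratchet rule are hypothetical, not the deployed parameters.

```python
from statistics import mean
from typing import List


def trailing_stops(closes: List[float], window: int, multiple: float) -> List[float]:
    """Long-side stop level for each bar from index `window` onward.

    Volatility proxy: mean absolute close-to-close change over the last
    `window` changes. Stop candidate: close - multiple * volatility.
    """
    stops: List[float] = []
    stop = float("-inf")
    for i in range(window, len(closes)):
        changes = [abs(closes[j] - closes[j - 1]) for j in range(i - window + 1, i + 1)]
        candidate = closes[i] - multiple * mean(changes)
        stop = max(stop, candidate)  # the stop never moves down once raised
        stops.append(stop)
    return stops


# Hypothetical price paths: same trend, different noise.
calm = trailing_stops([100.0, 101.0, 102.0, 103.0, 104.0, 103.0], window=2, multiple=2.0)
noisy_first = trailing_stops([100.0, 104.0, 100.0], window=2, multiple=2.0)[0]
```

On the calm path the daily changes are 1.0, so the stop trails 2.0 below the close and holds at 102.0 when the price dips on the last bar. On the noisy path the daily changes are 4.0, so the first stop sits 8.0 below the close.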

Every one of the twenty-five exit-parameter combinations beats the S&P 500 by a meaningful margin, and every curve follows the same overall shape through every drawdown and recovery. The cluster is tight, with ending values sitting in a narrow band relative to the total compounded return. There is no single knife-edge volatility-stop setting propping up the result. The exit logic works across the full plausible parameter space.

Entry-side robustness, the signal-length sweep

The entry side of the strategy is governed by lookback-window signals on each engine. Each signal has a lookback window that controls how sensitive it is. A shorter window produces more entries and more noise. A longer window produces fewer entries but waits for more confirmation. The second sweep varies the trend and mean-reversion lookbacks independently to test whether the edge survives different signal sensitivities.
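To make the lookback trade-off concrete, the sketch below uses a simple breakout rule: a bar signals when its close exceeds the highest close of the prior `lookback` bars. This rule and the price series are assumptions chosen for illustration. The page does not disclose the actual entry signals.

```python
from typing import List


def breakout_signals(closes: List[float], lookback: int) -> List[bool]:
    """True on bars whose close exceeds the highest close of the prior `lookback` bars."""
    return [
        i >= lookback and closes[i] > max(closes[i - lookback:i])
        for i in range(len(closes))
    ]


# Hypothetical closes with a choppy upward drift.
closes = [10.0, 11.0, 10.0, 12.0, 11.0, 13.0, 12.0, 14.0]
short_window_entries = sum(breakout_signals(closes, lookback=2))
long_window_entries = sum(breakout_signals(closes, lookback=4))
```

On this series the two-bar lookback fires three times and the four-bar lookback fires twice, which matches the intuition that a longer window waits for more confirmation.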

Again, every combination beats the benchmark by a meaningful margin and every curve retains the same overall path. The entry logic is not hanging on one specific lookback value. Whether the lookback windows are shorter or longer than the deployed configuration, the strategy still produces a similarly shaped equity curve ending materially above the S&P 500. Entry and exit are independently robust, which is the stronger test because it rules out the possibility that one side of the system is carrying the other.

Plateau selection, why the deployed setting is not the peak

A common and valid question when looking at a parameter grid is whether the deployed configuration was simply picked as the highest-performing cell. If the development process had done that, the deployed setting would be sitting at the top of a narrow spike, and any small move away from it would collapse performance. That is the curve-fitting failure mode a sweep is designed to detect.

The selection method used here was different. The grid was inspected across multiple planes, with return stability and drawdown stability both weighing equally into the decision. The goal was not to find the single best cell on any one metric. It was to find a cell whose neighbours produced similar returns and similar drawdowns, so that a small parameter miscalibration in deployed trading would not change the character of the results. Both planes were considered together, and the deployed setting was chosen from the region where the neighbourhood was consistent on both.
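One way to express plateau selection in code is to score each grid cell by how much its neighbours vary on both planes, then pick the cell with the lowest combined score. The scoring function, the 3 by 3 neighbourhood and the sample grids below are assumptions made for this sketch. The page describes an inspection of the grid, not this specific formula.

```python
from typing import List, Tuple

Grid = List[List[float]]


def neighbourhood(grid: Grid, r: int, c: int) -> List[float]:
    """Values in the 3x3 block around (r, c), clipped at the grid edges."""
    rows, cols = len(grid), len(grid[0])
    return [grid[i][j]
            for i in range(max(0, r - 1), min(rows, r + 2))
            for j in range(max(0, c - 1), min(cols, c + 2))]


def instability(grid: Grid, r: int, c: int) -> float:
    """Range of the neighbourhood relative to the size of its mean."""
    vals = neighbourhood(grid, r, c)
    return (max(vals) - min(vals)) / abs(sum(vals) / len(vals))


def most_stable_cell(returns: Grid, drawdowns: Grid) -> Tuple[int, int]:
    """Cell minimising return instability plus drawdown instability, equally weighted."""
    cells = [(r, c) for r in range(len(returns)) for c in range(len(returns[0]))]
    return min(cells, key=lambda rc: instability(returns, *rc) + instability(drawdowns, *rc))


# Hypothetical planes: a return spike in one corner, flat drawdowns.
returns = [[10.0, 10.0, 10.0], [10.0, 10.0, 10.0], [10.0, 10.0, 30.0]]
drawdowns = [[5.0, 5.0, 5.0], [5.0, 5.0, 5.0], [5.0, 5.0, 5.0]]
chosen = most_stable_cell(returns, drawdowns)
```

The highest-returning cell at (2, 2) is not chosen, because its neighbourhood is the least consistent. The selected cell is one whose neighbours all look alike, which is the behaviour the paragraph above describes.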

The heatmap below shows the profit factor plane of the same 5 by 5 entry-parameter grid, with the actual parameter values anonymised as x and y. Profit factor is the ratio of gross profits to gross losses, so any cell above 1.0 is net profitable. It is the cleanest way to visualise whether the edge survives away from the deployed configuration.
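Profit factor as defined above is straightforward to compute from a list of per-trade profit and loss figures. The trade values in this sketch are made up for the example.

```python
from typing import Iterable


def profit_factor(trade_pnls: Iterable[float]) -> float:
    """Gross profits divided by gross losses; above 1.0 means net profitable."""
    pnls = list(trade_pnls)
    gross_profit = sum(p for p in pnls if p > 0)
    gross_loss = -sum(p for p in pnls if p < 0)
    if gross_loss == 0:
        return float("inf")  # no losing trades, ratio is unbounded
    return gross_profit / gross_loss


# Hypothetical trades: 450 of gross profit against 300 of gross loss.
example_pf = profit_factor([300.0, -100.0, 150.0, -200.0])
```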

The key feature is that every cell in the neighbourhood is green. Profit factor ranges from roughly 1.45 to 1.59 across the entire grid. A curve-fit strategy would show one green cell surrounded by red, with profitability collapsing as soon as any parameter moved. Instead, the whole neighbourhood is comfortably profitable. The edge is not sitting on a knife-edge parameter choice. It is generated by the underlying rules and survives in every direction around the deployed setting.

The same broad stability shows up on the max-drawdown plane when it is inspected alongside this one. Drawdown is not uniform across the whole grid, but it clusters tightly in the region the deployed configuration was picked from, which is why both planes were weighed together at selection time rather than either one in isolation.

How the strategy was developed

The development process itself was designed to resist curve fitting, in three deliberate stages.

Stage one was building the base logic on unleveraged instruments. The trend and mean-reversion engines were designed and tested against one-times ETFs tracking the same underlying exposures, where signal behaviour is cleaner and leverage-related decay does not distort the data. The goal in this stage was to establish whether the underlying trading rules captured real market behaviour, independent of any amplification. If the rules did not work on the unleveraged instruments, they were not going to work anywhere.

Stage two was validation on data the rules had never seen, using both a backward and a forward out-of-sample test. The engines were built and their parameters chosen on the 2019 to 2022 window. Once the base logic performed on that development set, it was tested in two directions. Going backwards, the rules were run against the May 2017 to end-2018 window, which predates the build window and had never been seen during development. Going forwards, the rules were run against the 2023 to 2024 window, which had been deliberately held out of the build. If the rules had been curve-fit to the development data, performance on either out-of-sample window would have fallen apart. It did not, which is what gave confidence that the edge was structural rather than accidental.

Stage three was calibrating for the two- and three-times leveraged instruments actually deployed. Leverage changes the risk profile materially. During the 2023 to 2024 forward out-of-sample window, the volatility-stop multiples and regime-filter thresholds were re-tuned to accommodate the faster, larger moves that leveraged ETFs produce. This calibration did not change the underlying logic. It adjusted the risk-taking envelope so the same rules would operate safely on instruments that amplify every move by a factor of two or three. The rules themselves were not refit to the 2023 to 2024 data. Only the risk parameters, namely the stop multiples and regime-filter thresholds, were recalibrated for leverage.

This staged process is why the equity curves in both parameter sweeps stay tightly clustered rather than diverging when the parameters are varied. The underlying logic was validated before leverage-specific tuning was applied, so the strategy is not reliant on any specific choice of entry length or stop multiplier for its edge.

What this does not prove

A clean parameter sweep and a disciplined development process do not guarantee future performance. What they do is rule out the most common reason backtested strategies fail when deployed forward, which is that the rules were fit to the history rather than discovered from it. The edge here rests on the behaviour of the instruments and the market regimes traded. If those behaviours change materially, the strategy will change with them. What the sweep shows is that within the historical window, the edge was not an artefact of parameter choice.

Current Status

Performance figures on this page are a combination of two periods. The May 2017 to end-2024 portion is historical simulation constructed from actual market data using the strategy's current rules. The 2025 portion onward is the strategy running forward out-of-sample, with results accumulating on the same rules and all parameters frozen. Both segments are shown continuously on the same equity curve, with a marker indicating the boundary between the historical-simulation and forward-out-of-sample periods.

How You Access the Strategy

The strategy is delivered as non-discretionary general advice. MFAM generates the signals and, once the investor authorises each one, the MFAM adviser places the order on the investor's behalf. The mechanics below are how that works in practice.

Your account, your custody

The investor opens their own account at Interactive Brokers. The account and all cash deposits are legally held by the investor, with client cash sitting in Interactive Brokers' segregated trust account under Australian and United States client-money rules. MFAM does not hold, pool, or control investor funds at any point. The account is yours, the money is yours, and you can close the account or withdraw at any time without MFAM's involvement. The MFAM adviser is added to the account as an authorised adviser with trading authority only, so orders can be placed on the investor's behalf once authorised, but cash cannot be moved out of the account by MFAM.

Signal delivery

General advice trade signals are issued to the investor by email and SMS during the trading day as the rules fire. Each signal is a specific instruction: the instrument, the side, and the size expressed as a percentage of portfolio. The investor only needs to reply yes to authorise. No action from the investor means no trade.
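The signal-and-reply flow can be modelled in a few lines. The class, field names and reply parsing below are hypothetical and are shown only to make the "no reply means no trade" rule concrete. They do not describe MFAM's actual messaging system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Signal:
    instrument: str   # ticker of the leveraged ETF
    side: str         # "buy" or "sell"
    size_pct: float   # size as a percentage of portfolio


def is_authorised(reply: Optional[str]) -> bool:
    """Only an explicit yes authorises the trade; silence or anything else does not."""
    return reply is not None and reply.strip().lower() == "yes"


# Hypothetical signal and replies.
signal = Signal(instrument="TQQQ", side="buy", size_pct=10.0)
place_order = is_authorised("Yes ")
no_reply = is_authorised(None)
```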

Execution

Once the investor has authorised a signal, the MFAM adviser places the order on the Interactive Brokers account. Orders are typically placed as market-on-open for the next United States session, which executes overnight Australian time. This matches how the strategy has been modelled, so executed orders track the backtest assumptions rather than drifting away from them.

Non-discretionary by design

Every trade requires explicit authorisation from the investor before it is placed. The MFAM adviser does not have discretion to enter or exit positions without a yes from the investor. This is general advice with client-authorised execution, not a managed account and not a managed fund. The investor decides whether any given signal is actioned, and can decline a signal or exit a position at any time.

Risk level is fixed, exposure scales with investment amount

The thirteen instruments used are a mix of two- and three-times leveraged ETFs, and the strategy's risk profile is fixed by its design rather than chosen by the investor. Volatility-adjusted dollar-risk parity sizing, a per-leg ceiling, and the adaptive slot count across the thirteen legs together define one risk profile that every investor receives, and that is the configuration the performance figures on this page are based on. The only dial the investor controls is how much capital to allocate to the strategy. A larger allocation produces a larger absolute swing in dollar terms, while the percentage return profile, drawdown profile, and per-leg risk budget remain the same regardless of investment size.

Cash-account structure, no broker margin

The strategy is engineered to run on an unmargined cash account. The investor's broker account holds the cash and the long ETF positions outright. There is no broker margin in use, no overnight financing or borrow cost, and no margin-call mechanic. The maximum loss on any individual trade is bounded by the position size and the volatility-stop, not by a forced-liquidation cascade triggered by a margin requirement.

The leverage that appears in the return profile comes from inside the underlying ETFs themselves. Direxion and similar issuers manage the daily rebalancing within each fund, and the investor pays for that operational service through the fund's expense ratio rather than through any borrow charge on their own account. This structure removes a class of failure mode, the gap-down forced liquidation that ends careers in conventional margin-leveraged trading, and is the reason a strategy of this return profile can be run on a standard SMSF or retail brokerage cash account without margin-derived blow-up risk. Position-level losses on the underlying leveraged ETFs themselves remain real and material, and are managed by the strategy's volatility-stop and per-leg risk-cap framework.

Minimum investment

The strategy is offered from a minimum investment of AUD 20,000. There is no per-trade dollar minimum and no fixed monthly or platform fee. The all-in 30 basis-point per-fill charge applies on every entry and exit regardless of trade size, which works out to roughly 5 per cent per annum at the strategy's natural turnover. On an AUD 20,000 account that is approximately AUD 1,000 in annual fees, which the historical return profile is engineered to absorb. Fee specifics are discussed during the onboarding conversation with an MFAM adviser.
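The fee arithmetic above can be checked in a few lines. The 30 basis-point charge, the 5 per cent annual figure and the AUD 20,000 account come from the text. The implied turnover multiple is a derived figure that assumes, for simplicity, a constant account value across the year.

```python
FEE_PER_FILL = 0.003      # 30 basis points on the notional of each fill
ANNUAL_FEE_DRAG = 0.05    # "roughly 5 per cent per annum" from the text
ACCOUNT_AUD = 20_000.0    # minimum investment from the text

# Annual fees on the minimum account.
annual_fee_aud = ACCOUNT_AUD * ANNUAL_FEE_DRAG

# Total notional filled per year (entries plus exits) as a multiple of
# account value, implied by the two figures above. Derived, not quoted.
implied_fill_multiple = ANNUAL_FEE_DRAG / FEE_PER_FILL
```

Under that assumption, the annual fee comes to about AUD 1,000, and the implied fill notional is roughly 16.7 times the account value per year, entries and exits combined.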

You have seen the full mechanics, the historical performance, the risk profile, and exactly how the account, signals and execution work in practice. The next step is a short conversation with an MFAM adviser to walk through the Interactive Brokers paperwork, sizing for your portfolio, and any questions on the system before you start.

Request a Callback. Want the fundamentals first? Take the free 5-day trading course.

General Advice Warning

The information on this page is general in nature and does not take into account your individual objectives, financial situation, or needs. Before acting on any information presented here, you should consider its appropriateness having regard to your own circumstances, and where relevant consider the Product Disclosure Statement for any financial product referred to.

Hypothetical and Out-of-Sample Performance Disclosure

Performance data presented on this page combines two segments, both of which are hypothetical. The May 2017 to end-2024 segment is backtested, constructed with the benefit of hindsight using historical market data. The 2025 onward segment is forward out-of-sample, where the same frozen rules are applied to data that arrived after the development period closed, with execution simulated at the same market-on-open fills the backtest assumes. Neither segment reflects the friction of actual real-money trading, including but not limited to execution slippage, capacity constraints, fill timing, adverse selection, changes in market structure, or changes in the behaviour of underlying instruments over time. Hypothetical performance, whether backtested or forward out-of-sample, is not an indicator of what actual trading would have produced, and should not be interpreted as a forecast of future performance.

Portfolio Construction

The backtest is run as a single shared pool across all three engines. Each of the thirteen legs is sized using a volatility-adjusted dollar-risk parity rule that targets a fixed risk budget per leg given the instrument's recent realised volatility, with a per-leg ceiling and a portfolio-level shrink-to-fit when concurrently fired signals would otherwise breach total exposure. In deployed operation the engines run on a single Interactive Brokers account so that the position-sizing base across all thirteen legs is the live portfolio net liquidation value (NLV).
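The three sizing steps described above (risk-budget sizing, a per-leg ceiling, and a portfolio-level shrink-to-fit) can be sketched as follows. The leg names, the 2 per cent risk budget, the 40 per cent ceiling and the 80 per cent exposure cap are hypothetical values chosen to make the arithmetic visible, not the deployed parameters.

```python
from typing import Dict


def size_legs(risk_budget: float, vols: Dict[str, float],
              ceiling: float, max_total: float) -> Dict[str, float]:
    """Portfolio weights per leg.

    1. Raw weight = risk_budget / volatility, so noisier legs get less.
    2. Each weight is capped at `ceiling`.
    3. If the capped weights sum above `max_total`, all are scaled down
       proportionally (shrink-to-fit).
    """
    capped = {leg: min(risk_budget / vol, ceiling) for leg, vol in vols.items()}
    total = sum(capped.values())
    scale = min(1.0, max_total / total) if total > 0 else 1.0
    return {leg: weight * scale for leg, weight in capped.items()}


# Hypothetical legs with signals firing at the same time.
weights = size_legs(
    risk_budget=0.02,
    vols={"LEG_A": 0.05, "LEG_B": 0.10, "LEG_C": 0.04},
    ceiling=0.40,
    max_total=0.80,
)
```

Here the raw weights are 40, 20 and 50 per cent. The ceiling trims the third leg to 40 per cent, and the shrink-to-fit step then scales all three by 0.8 so total exposure lands on the 80 per cent cap.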

Commissions and Fees

Returns shown on this page are calculated net of an all-in 30 basis-point per-fill charge applied to the notional traded on every entry and every exit. The 30 bps is the actual per-fill figure used in the calculation, not a conservative stand-in. It bundles the MFAM management commission, broker commissions, bid-ask spread, and the realistic execution slippage of trading the underlying leveraged ETFs into a single number. There is no separate management fee, performance fee, or platform fee charged on top of the displayed returns. Exchange and regulatory pass-through fees, which a broker always passes through on top of commissions, sit outside this 30 bps figure. The strategy runs on an unmargined cash account so there are no financing or borrow costs to apply. Tax depends on the individual investor's circumstances and is not modelled in the figures shown.

Past Performance

Past performance, whether actual or hypothetical, is not a reliable indicator of future performance. Future results, including any forward out-of-sample period, may differ materially from the backtested track shown on this page.

Leveraged Instruments

The strategy trades leveraged exchange-traded funds. Leveraged ETFs carry materially higher risk than their unleveraged counterparts and can experience significant decay in volatile or sideways markets. They are not suitable for all investors and are generally inappropriate as long-term buy-and-hold investments. The strategy's rules-based approach seeks to manage this risk, but cannot eliminate it.
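The decay effect is easiest to see with a two-day example. The sketch below compounds daily returns with and without three-times daily leverage. It ignores fund fees and tracking error, and the index path is invented so that the unleveraged index ends exactly where it started.

```python
from typing import Iterable


def compound(daily_returns: Iterable[float], leverage: float = 1.0) -> float:
    """Ending value of 1.0 invested, with leverage reset every day."""
    value = 1.0
    for r in daily_returns:
        value *= 1.0 + leverage * r
    return value


# Hypothetical index path: up 10 per cent, then down 1/11 (about 9.09 per cent),
# which returns the unleveraged index to its starting level.
path = [0.10, -1.0 / 11.0]
index_end = compound(path)
leveraged_end = compound(path, leverage=3.0)
```

The index finishes flat, but the three-times product finishes at about 0.945, a loss of roughly 5.5 per cent. Repeating that round trip through a sideways market compounds the shortfall, which is why holding periods and exits matter for these instruments.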

No Guarantee

MF & Co. Asset Management makes no representation or guarantee regarding the future performance of the strategy. Returns may be negative. You may lose capital.

About MFAM

MF & Co. Asset Management Pty Ltd holds an Australian Financial Services Licence (AFSL). This page has been prepared for general information purposes. To discuss whether the strategy may be appropriate for your circumstances, speak with an MFAM adviser.